✍️ By Abhishek Kumar | #FirstCrazyDeveloper

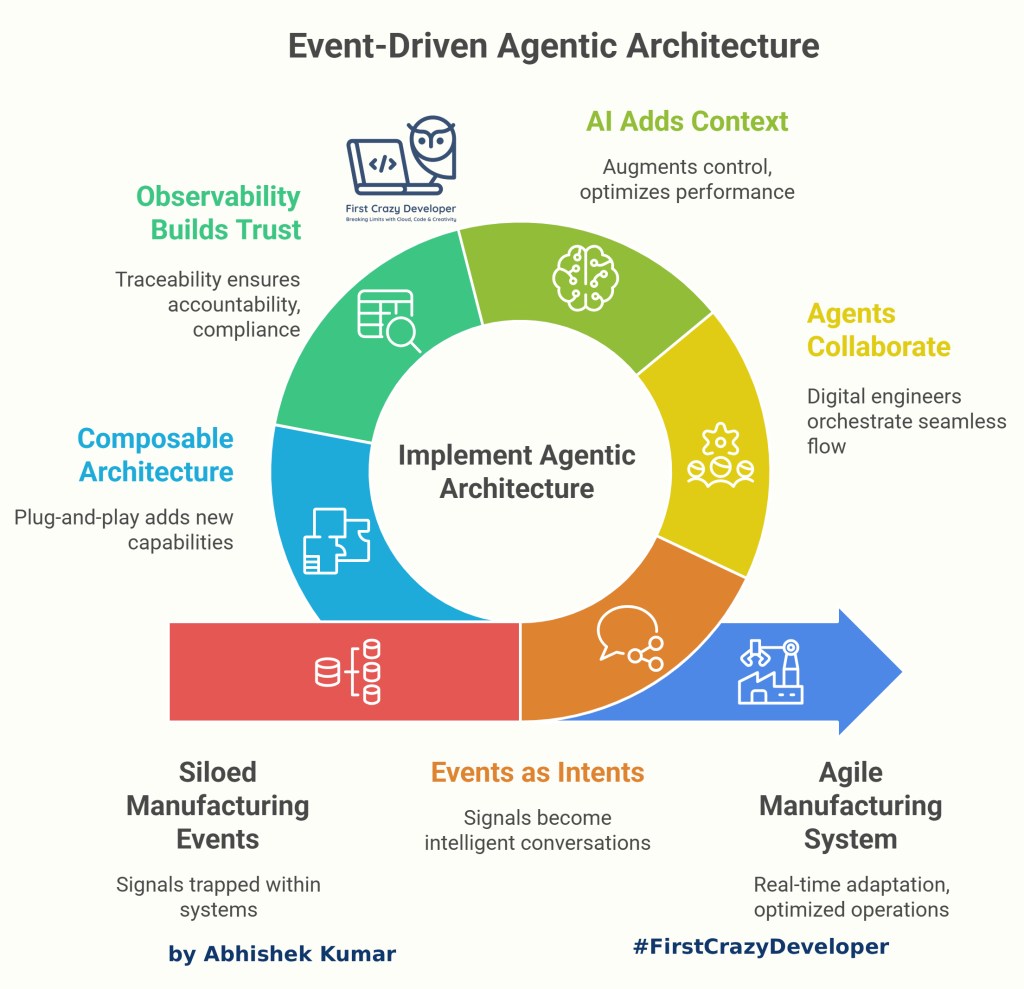

In today’s world of intelligent automation and AI-driven operations, event-driven agentic systems are becoming the architectural backbone for enterprises integrating AI, microservices, and human workflows. These systems are not just reactive—they are autonomous, adaptive, and context-aware, capable of executing tasks intelligently across diverse environments.

This blog explores the transformative potential of event-driven agentic architecture in modern time. It argues that by treating events as intents and leveraging intelligent agents, manufacturing systems can achieve unprecedented agility, collaboration, and contextual awareness. The document outlines the key principles of this architecture, including the role of events as a nervous system, the collaborative nature of agents, the strategic integration of AI, the importance of observability, and the composable nature of the future manufacturing landscape.

🧠 Events Become the Nervous System

In the realm of modern manufacturing, a multitude of events occur continuously. These events, such as a pump starting, a batch completing, a temperature exceeding its limit, or a label being printed, represent valuable signals. However, in traditional setups, these signals often remain confined within systems like PLCs, SAP, or MES, awaiting manual data polling or the triggering of predefined workflows.

The event-driven agentic architecture revolutionizes this paradigm by transforming each signal into an intelligent conversation across systems. This conversation is characterized by its contextual awareness, autonomy, and explainability. When a machine’s vibration spikes or a new process order arrives from SAP, it’s no longer just data; it’s an intent.

Agents, acting as intelligent entities, can consume these events, make rapid decisions, enrich the context, and respond accordingly, all within milliseconds. This decoupling from rigid APIs empowers manufacturing systems with unparalleled agility, enabling them to adapt swiftly to changing conditions and optimize operations in real-time.

✨ Agents Bring Human-Like Collaboration

Imagine agents as your digital engineers, each possessing specialized knowledge and skills. Each agent knows its designated task, whether it’s monitoring, analyzing, deciding, or executing. Together, these agents orchestrate a seamless flow, mirroring the collaboration of a well-coordinated team.

Consider the following example:

- The Anomaly Agent: Detects anomalies in mixer vibrations, identifying potential issues before they escalate.

- The Parts Agent: Checks SAP inventory to ensure the availability of necessary components for maintenance or production.

- The Work Order Agent: Raises a ticket to initiate maintenance or repair procedures based on the detected anomalies and parts availability.

- The Summary Agent: Leverages Azure OpenAI to generate a human-readable maintenance note, providing clear and concise instructions for technicians.

This entire process is seamlessly orchestrated through an Event Mesh, such as Kafka or Azure Event Grid, ensuring efficient and reliable communication between agents.

💡 AI Adds Context, Not Chaos

The true magic unfolds when Large Language Models (LLMs), such as GPT-4o or Azure OpenAI, are strategically integrated into the workflow to enhance context and provide valuable insights. These AI models are deployed only where they can add significant value, such as summarizing events, explaining anomalies, or suggesting optimal next actions.

It’s crucial to emphasize that the core of the system remains deterministic. AI doesn’t replace control; instead, it augments it by providing additional context and intelligence. This ensures that the system operates reliably and predictably while leveraging the power of AI to optimize performance and decision-making.

🧱 Observability Builds Trust

In the manufacturing industry, traceability is paramount. Every action must be auditable, with a clear explanation of why a label was printed, why a mixer stopped, or why a batch was requeued.

By combining OpenTelemetry with event logs and audit trails, we can create trustable AI systems that can be verified at any time. This transparency and accountability are essential for building confidence in the system and ensuring compliance with regulatory requirements.

✨ The Future Is Composable

The composable nature of this architecture is particularly exciting. Once a factory operates on events and agents, adding new capabilities becomes a simple plug-and-play process.

For example:

- Quality Inspection via Vision AI: Add a “Vision Agent” to perform automated quality checks using computer vision.

- Supplier Updates: Subscribe to a “SupplyChain.EventMesh” to receive real-time updates from suppliers.

- Sustainability Insights: Connect energy meters via IoT agents to monitor energy consumption and identify opportunities for optimization.

This composability represents the true essence of Industry 4.0 – not just digitalization, but the seamless integration of intelligence into the event fabric of manufacturing. It enables manufacturers to adapt quickly to changing market demands, optimize operations, and achieve unprecedented levels of efficiency and sustainability.

Let’s explore the key architectural components, design principles, and challenges that define such systems.

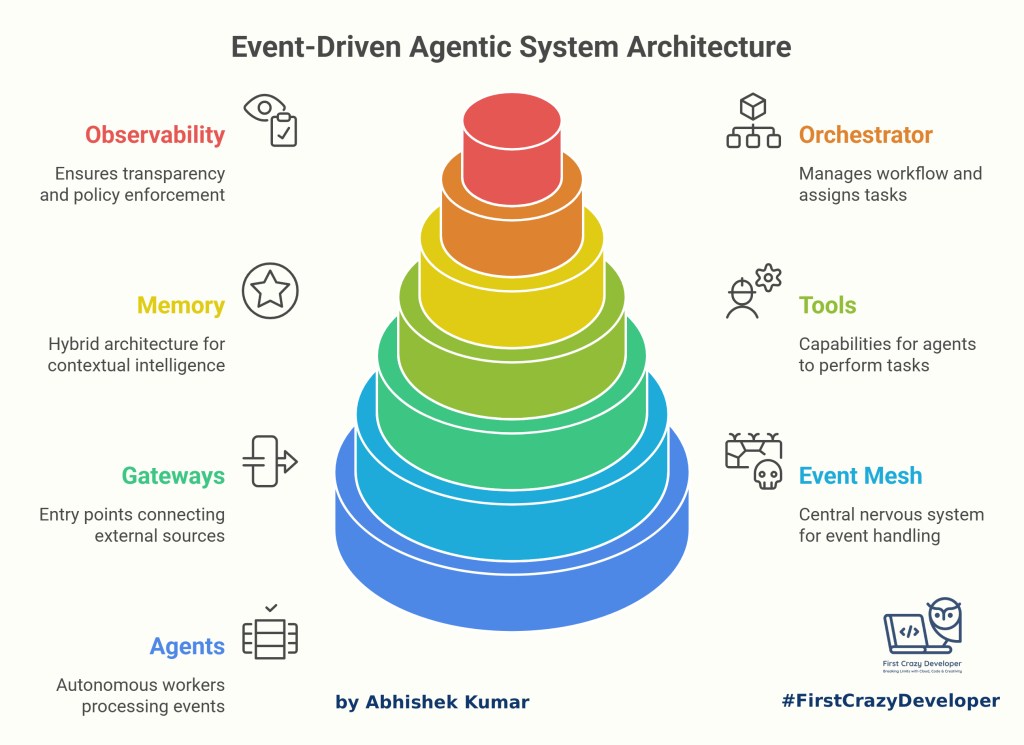

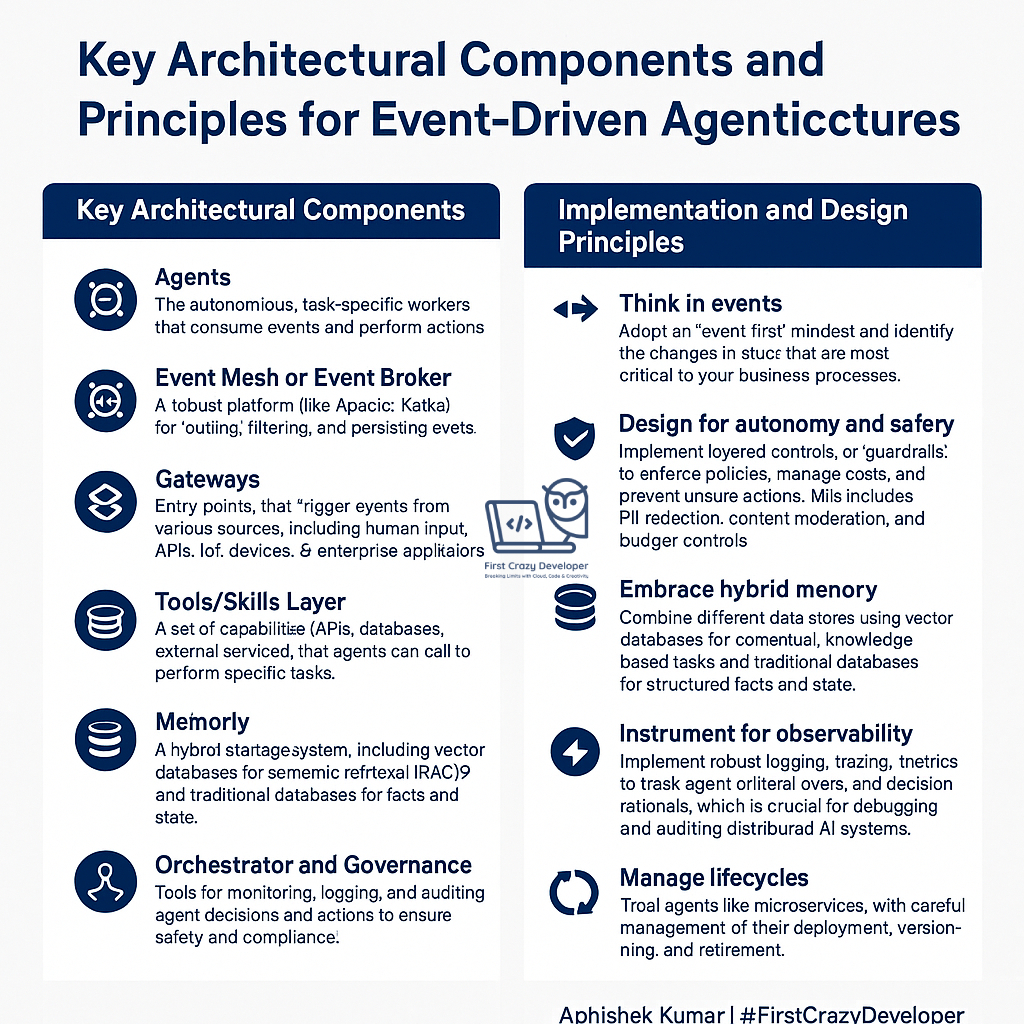

🧩 Key Architectural Components

A reference architecture for an event-driven agentic system typically includes the following layers and components:

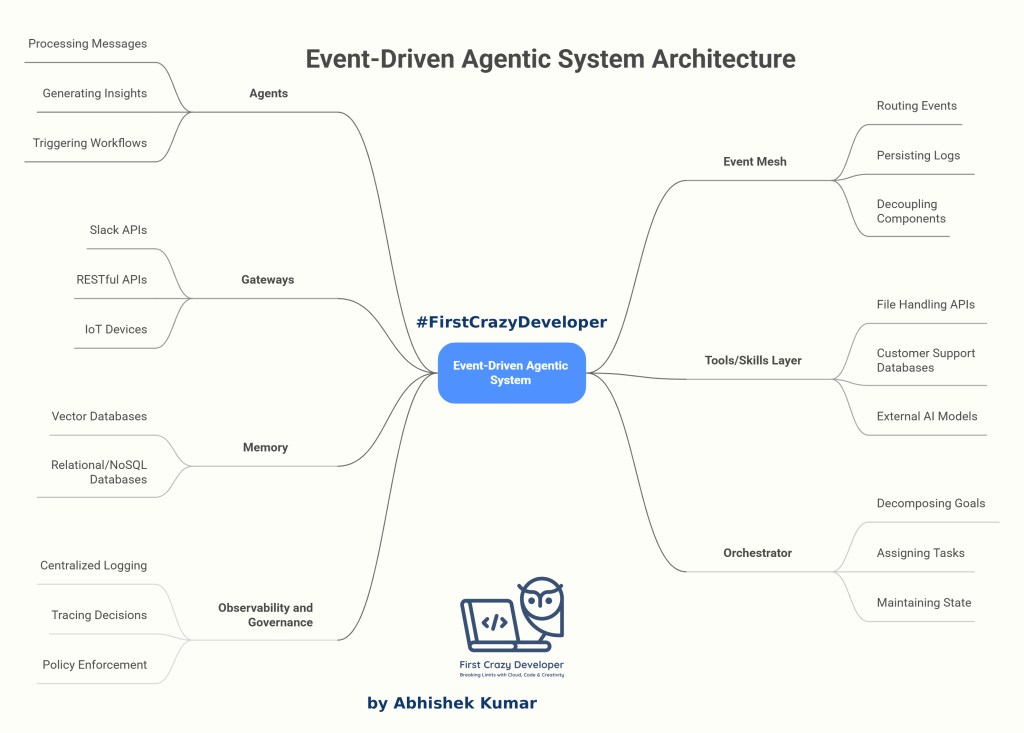

1. Agents

Agents are autonomous, task-specific workers that consume events and act upon them. They are the core execution units within the system. Their responsibilities include:

- Processing messages: Receiving and interpreting event data.

- Generating insights: Deriving meaningful information from the event data.

- Triggering downstream workflows: Initiating subsequent actions based on the processed information.

Each agent operates independently but collaborates through the event mesh to achieve complex business goals. This independence allows for scalability and resilience.

2. Event Mesh / Event Broker

The event mesh is the central nervous system of the architecture, providing a robust platform for handling events. Key functionalities include:

- Routing and filtering events: Directing events to the appropriate agents based on predefined rules.

- Persisting event logs for replay and recovery: Ensuring data durability and enabling the system to recover from failures.

- Decoupling event producers and consumers: Allowing components to operate independently without direct dependencies.

Popular event mesh technologies include Apache Kafka, Azure Event Grid, and Confluent Platform. This decoupling ensures scalability and fault tolerance across distributed environments.

3. Gateways

Gateways act as entry points to the system, connecting external sources and translating their inputs into events. They facilitate integration with:

- Slack APIs: Enabling communication and workflow triggers from Slack.

- RESTful APIs: Providing a standard interface for interacting with other systems.

- IoT devices: Capturing data from sensors and other connected devices.

- Web applications: Allowing users to interact with the system through web interfaces.

- Legacy systems via connectors: Bridging the gap between modern and older systems.

Gateways transform external triggers into well-defined events that enter the Event Mesh, enabling omnichannel and system-wide orchestration.

4. Tools / Skills Layer

This layer provides agents with the capabilities they need to perform their tasks. It encompasses a wide range of resources, including:

- File handling APIs: Allowing agents to process and manipulate files.

- Customer support databases: Providing access to customer information and support resources.

- External AI models like OpenAI, Anthropic, or custom LLMs: Enabling agents to leverage advanced AI capabilities.

Agents dynamically select and invoke these skills to fulfill their assigned tasks, making the system highly adaptable.

5. Memory

A hybrid memory architecture is crucial for enabling contextual intelligence. It combines:

- Vector databases (e.g., Pinecone, Weaviate, Azure AI Search): Storing semantic embeddings for Retrieval-Augmented Generation (RAG), allowing agents to retrieve relevant information based on meaning.

- Relational/NoSQL databases: Maintaining structured facts, states, and system context, providing a reliable source of truth.

Together, they enable agents to “remember,” “reason,” and “act” coherently across sessions, improving their overall performance.

6. Orchestrator

The Orchestrator manages the overall workflow, breaking down high-level goals into smaller, manageable steps. Its responsibilities include:

- Decomposing high-level goals into smaller steps: Breaking down complex tasks into simpler sub-tasks.

- Assigning tasks to appropriate agents: Distributing work based on agent capabilities and availability.

- Maintaining the workflow’s state: Tracking the progress of each task and ensuring proper sequencing.

Frameworks like LangGraph, LangChain, or Azure AI Foundry orchestration agents can manage these distributed interactions with built-in control logic and error recovery.

7. Observability and Governance

Enterprise-grade agentic systems require transparency and trust. This layer includes:

- Centralized logging and metrics collection: Providing a comprehensive view of system performance and behavior.

- Tracing of agent decisions: Tracking the decision-making process of each agent.

- Policy enforcement (PII redaction, cost limits, moderation rules): Ensuring compliance with regulations and organizational policies.

Observability ensures that every autonomous decision is explainable, safe, and auditable.

⚙️ Implementation & Design Principles

1. Think in Events

Adopt an “event-first” mindset. Identify critical state changes and design the architecture around these events rather than static APIs.

2. Design for Autonomy and Safety

Autonomy must coexist with governance. Use layered guardrails to manage costs, enforce policies, and prevent unsafe actions. Examples include:

- Role-based access: Limiting access to sensitive resources based on user roles.

- Budget quotas: Setting limits on resource consumption to control costs.

- PII and content moderation filters: Protecting sensitive data and preventing inappropriate content.

3. Embrace Hybrid Memory

Blend vector memory for semantic recall with structured databases for business logic. This dual approach helps agents reason contextually while maintaining factual consistency.

4. Instrument for Observability

Visibility is non-negotiable. Implement:

- Tracing for distributed workflows: Tracking the flow of events and actions across the system.

- Metrics for performance and cost: Monitoring key performance indicators and resource utilization.

- Logs for decision audits: Recording the decisions made by agents for auditing and debugging purposes.

5. Manage Agent Lifecycles

Treat agents like microservices. Version, deploy, and retire them systematically through CI/CD pipelines (e.g., Azure DevOps or GitHub Actions) and container orchestrators like AKS or Docker Swarm.

🚧 Challenges for Architects

1. Non-Deterministic Reasoning

Agents powered by LLMs make probabilistic decisions, leading to non-deterministic results. Architects must implement explainability, deterministic fallback paths, and validation workflows.

2. Governance and Control

Autonomy without oversight can be risky. Introduce transparent observability, policy guardrails, and approvals for critical tasks to maintain trust.

3. Integration with Legacy Systems

Legacy monoliths often lack event interfaces. Architects need to:

- Wrap them with API or event gateways.

- Build adapters for message publishing.

- Gradually evolve toward an event-driven foundation.

🏗️ Real-World Integration Examples

Using the reference architecture above, you can integrate:

- Salesforce or ServiceNow: For workflow triggers and customer relationship management.

- MongoDB or Cosmos DB: For context memory and data storage.

- OpenAI / Anthropic APIs: For reasoning and natural language processing.

- Slack or Web Interfaces: For human-in-the-loop feedback and user interaction.

Each integration enhances the system’s intelligence, autonomy, and reliability.

💬 Abhishek’s Take

In every modern manufacturing setup I’ve seen — whether it’s paint, automotive, or food processing — events are already happening everywhere: a pump starts, a batch completes, a temperature crosses its limit, or a label gets printed.

But traditionally, these signals stayed locked inside systems like PLCs, SAP, or MES, waiting for someone to poll data or trigger manual workflows.

The event-driven agentic architecture changes that story.

It transforms every signal into an intelligent conversation across systems — a conversation that’s contextual, autonomous, and explainable.

🧠 Final Thought

Agentic architectures are not just about automation — they’re about creating systems that think and act responsibly.

For developers, this means designing micro-agents that are reusable and observable.

For architects, it means shaping event meshes that are scalable, safe, and policy-driven.

When you combine the two, you don’t just build software — you build autonomous ecosystems that learn, adapt, and continuously improve factory operations.

Event-driven agentic systems are the next evolution of distributed computing—where events drive intelligence, and agents act with purpose.

By combining Event Mesh, autonomous agents, and governed orchestration, enterprises can unlock the full potential of AI-powered automation—securely, efficiently, and transparently.

The next time your IoT sensor sends an event, imagine it triggering a chain of smart agents quietly working behind the scenes — predicting, optimizing, and improving your plant’s efficiency in real time.

That’s the power of event-driven intelligence.

🚀 Scenario: Predictive Maintenance → Work-Order Automation

Business story (factory floor):

Vibration and temperature sensors on a paint mixer stream readings. When anomalies appear, the system:

- detects the issue,

- checks spare-parts inventory,

- opens a maintenance work order in SAP/CMMS,

- schedules a technician, and

- sends a short LLM-generated incident summary to Teams/Slack with links to manuals (RAG).

All of this happens through the Event Mesh so producers and consumers are decoupled.

🧩High-level architecture (Azure-flavored)

- Gateways:

IoT Hub / MQTT → Edge Gateway → Kafka (or Azure Event Hubs for Kafka API) topiciot.sensor.reading. - Event Mesh / Topics:

iot.sensor.reading(ingress)maintenance.anomaly.detectedmaintenance.workorder.createdagent.audit

- Agents & Tools:

- Anomaly Agent (Python): z-score/ML to detect anomalies

- Parts Agent: checks SAP MM/D365/Maximo via REST/ODATA

- Work-Order Agent: creates order in SAP PM (or any CMMS)

- Summary Agent: uses RAG (vector DB with manuals) + LLM to create short, human-readable summary

- Notifier Agent: posts to Teams/Slack

- Memory:

- Vector DB (Azure AI Search / Weaviate/Pinecone) → equipment manuals, SOPs, last 20 incidents

- Relational (SQL/Cosmos) → asset registry, thresholds, parts SKUs

- Orchestrator:

- Python LangGraph or .NET Durable Functions to fan-out and handle approvals/fallbacks

- Observability/Guardrails:

- OpenTelemetry → Azure Monitor/Grafana

- PII/content filters + token budgets for LLM calls

- Cost/timeout policies on external APIs

Event contracts (CloudEvents-style JSON)

iot.sensor.reading

{

"type": "sensor.reading",

"source": "mixer-23/edge-gw-1",

"specversion": "1.0",

"id": "mixer-23-1738700112",

"time": "2025-10-05T19:15:12Z",

"data": {

"assetId": "mixer-23",

"metrics": { "vibrationRMS": 8.2, "tempC": 78.3 },

"unit": { "vibrationRMS": "mm/s", "tempC": "C" }

}

}

maintenance.anomaly.detected

{

"type": "maintenance.anomaly",

"source": "agent.anomaly",

"specversion": "1.0",

"id": "anom-mixer-23-... ",

"data": {

"assetId": "mixer-23",

"severity": "High",

"reason": "Vibration RMS 8.2 (> threshold 6.0) with rising temp",

"features": { "rms": 8.2, "tempC": 78.3 }

}

}

Python: Edge → Kafka (Gateway publisher)

# pip install confluent-kafka fastapi uvicorn pydantic

from fastapi import FastAPI

from pydantic import BaseModel

from confluent_kafka import Producer

import json, os, time

BROKERS = os.getenv("KAFKA_BROKERS","localhost:9092")

TOPIC = "iot.sensor.reading"

producer = Producer({"bootstrap.servers": BROKERS})

app = FastAPI()

class Reading(BaseModel):

assetId: str

vibrationRMS: float

tempC: float

@app.post("/ingest")

def ingest(r: Reading):

evt = {

"type": "sensor.reading",

"source": f"{r.assetId}/edge",

"specversion": "1.0",

"id": f"{r.assetId}-{int(time.time())}",

"data": {"assetId": r.assetId, "metrics": {"vibrationRMS": r.vibrationRMS, "tempC": r.tempC}}

}

producer.produce(TOPIC, json.dumps(evt).encode())

producer.flush()

return {"ok": True}

Python: Anomaly Agent (stream consumer → publish anomaly)

Simple, transparent z-score + thresholds. Swap to sklearn/ONNX later without changing events.

# pip install confluent-kafka numpy

from confluent_kafka import Consumer, Producer

import os, json, numpy as np

from collections import deque

BROKERS = os.getenv("KAFKA_BROKERS","localhost:9092")

IN_TOPIC = "iot.sensor.reading"

OUT_TOPIC = "maintenance.anomaly.detected"

c = Consumer({"bootstrap.servers": BROKERS, "group.id": "anomaly-agent", "auto.offset.reset": "latest"})

p = Producer({"bootstrap.servers": BROKERS})

c.subscribe([IN_TOPIC])

window = deque(maxlen=120) # last N samples per asset (simplified single-asset demo)

def zscore(series):

arr = np.array(series, dtype=float)

return (arr[-1] - arr.mean()) / (arr.std() + 1e-6)

while True:

msg = c.poll(1.0)

if not msg or msg.error():

continue

evt = json.loads(msg.value())

m = evt["data"]["metrics"]["vibrationRMS"]

window.append(m)

if len(window) > 20:

z = zscore(window)

temp = evt["data"]["metrics"].get("tempC", 0)

if m > 6.0 or (z > 3.0 and temp > 70):

anomaly = {

"type": "maintenance.anomaly",

"source": "agent.anomaly",

"specversion": "1.0",

"id": evt["id"] + "-anom",

"data": {

"assetId": evt["data"]["assetId"],

"severity": "High" if m > 8.0 else "Medium",

"reason": f"Vibration {m} (z={z:.2f}) with temp {temp}",

"features": {"rms": m, "tempC": temp}

}

}

p.produce(OUT_TOPIC, json.dumps(anomaly).encode())

p.flush()

Why this agent matters: deterministic logic with transparent thresholds → easy to audit. If you later replace with an ML model, the event contract stays the same.

C#: Parts & Work-Order Agents (Kafka consumer → SAP/CMMS REST)

This worker listens for anomalies, checks parts, and creates a work order. Replace the URLs with SAP PM/D365/Maximo endpoints.

// <PackageReference Include="Confluent.Kafka" Version="2.*" />

// <PackageReference Include="System.Net.Http.Json" Version="8.*" />

using Confluent.Kafka;

using System.Net.Http.Json;

var http = new HttpClient { BaseAddress = new Uri(Environment.GetEnvironmentVariable("CMMS_BASE") ?? "https://cmms/api/") };

var sap = new HttpClient { BaseAddress = new Uri(Environment.GetEnvironmentVariable("SAP_BASE") ?? "https://sap/api/") };

var conf = new ConsumerConfig {

GroupId = "wo-agent",

BootstrapServers = Environment.GetEnvironmentVariable("KAFKA_BROKERS") ?? "localhost:9092",

AutoOffsetReset = AutoOffsetReset.Earliest

};

using var consumer = new ConsumerBuilder<Ignore, string>(conf).Build();

consumer.Subscribe("maintenance.anomaly.detected");

var producer = new ProducerBuilder<string, string>(new ProducerConfig {

BootstrapServers = Environment.GetEnvironmentVariable("KAFKA_BROKERS") ?? "localhost:9092"

}).Build();

while (true)

{

var cr = consumer.Consume();

var evt = System.Text.Json.JsonDocument.Parse(cr.Message.Value);

var data = evt.RootElement.GetProperty("data");

var assetId = data.GetProperty("assetId").GetString();

// 1) Check parts stock

var parts = await http.GetFromJsonAsync<List<Part>>($"parts?assetId={assetId}") ?? new();

var hasBearing = parts.Any(p => p.Sku == "BEARING-6205" && p.Qty > 0);

// 2) Create WO in CMMS/SAP PM

var woReq = new {

assetId,

shortText = "Mixer vibration anomaly",

longText = $"Auto-detected: {data.GetProperty("reason").GetString()}",

priority = "High",

requiredPart = "BEARING-6205",

partAvailable = hasBearing

};

var resp = await http.PostAsJsonAsync("workorders", woReq);

resp.EnsureSuccessStatusCode();

var created = await resp.Content.ReadFromJsonAsync<WorkOrder>();

// 3) Emit follow-up event

var outEvt = new {

type = "maintenance.workorder.created",

source = "agent.workorder",

specversion = "1.0",

id = created!.Id,

data = created

};

await producer.ProduceAsync("maintenance.workorder.created",

new Message<string, string> { Key = created.Id, Value = System.Text.Json.JsonSerializer.Serialize(outEvt) });

}

record Part(string Sku, int Qty);

record WorkOrder(string Id, string AssetId, string Status, string Priority);

Python: Summary Agent (RAG + LLM → Slack/Teams)

Pull prior incidents/manual snippets from the vector index, then ask an LLM to craft a 6-line incident brief for the technician.

# pip install openai slack_sdk

from openai import OpenAI

from slack_sdk.web import WebClient

import os, json

client = OpenAI(api_key=os.environ["AZURE_OPENAI_KEY"], base_url=os.environ["AZURE_OPENAI_ENDPOINT"])

slack = WebClient(token=os.getenv("SLACK_BOT_TOKEN"))

def rag_snippets(asset_id): # pretend vector search

return ["Refer to mixer-23 bearing replacement SOP step 4–9.",

"Past incident (2025-07): similar vibration; bearing worn."]

def summarize_and_notify(event):

data = event["data"]

snippets = "\n".join(rag_snippets(data["assetId"]))

prompt = f"""Create a short maintenance brief:

Asset: {data['assetId']}

Anomaly: {data.get('reason')}

Context:\n{snippets}

Format: Title, 3 bullet points, Recommended action, Safety note."""

msg = client.chat.completions.create(model="gpt-4o-mini", messages=[{"role":"user","content":prompt}]).choices[0].message.content

slack.chat_postMessage(channel="#maint-ops", text=msg)

# wire this to consume maintenance.workorder.created and call summarize_and_notify(...)

Guardrails: truncate prompts, redact any PII, cap tokens, log the completion usage to a cost dashboard.

Orchestration options

- LangGraph (Python): route

maintenance.anomaly.detected→ parallel Parts and Summary; wait for parts; if not available, open Procurement Task; continue to Work-Order Agent. - Durable Functions (.NET): orchestrator function awaits

PartsActivity+SummaryActivity(fan-out/fan-in) →CreateWorkOrderActivity→NotifyActivity. Human approval path if severity = High and confidence < 0.8.

What devs & architects learn from this pattern

- Event contracts first → agents stay loosely coupled and easy to replace.

- Deterministic first, AI second → anomaly rules are auditable; LLMs add communication & decision help.

- Hybrid memory → manuals/incidents in vectors; inventory/state in relational.

- Governance from day one → traces, budgets, retries, DLQs.

- Composable integrations → swap CMMS/ERP/LLM vendors with minimal blast radius.

Minimal test script (manual smoke)

Publish a fake reading that should trigger an anomaly:

curl -X POST http://localhost:8000/ingest \

-H "content-type: application/json" \

-d '{"assetId":"mixer-23","vibrationRMS":8.7,"tempC":79.1}'

You should see:

maintenance.anomaly.detected→maintenance.workorder.created→- Slack message with a concise summary + SOP links.

🚀 Scenario: Label Print Triage

SAP (Saturn) emits a Process Order Created event → Event Mesh → Orchestrator fans out to agents:

- Data Enricher Agent pulls BOM & regulatory text

- Template Selector Agent chooses the Loftware label

- Print Agent fires Automation/Control Center job

- Human-in-the-loop via Slack when confidence is low

Memory combines vector recall (RAG) for sentences with facts/state in SQL.

Observability logs every decision for audit.

🧩 Why this architecture matters (and what it unlocks)

- Resilience & speed: producers and consumers are decoupled via the Event Mesh—SAP can publish once, any number of agents react.

- Explainability & audit: decisions are evented, logged, and replayable. You can prove why a label was printed.

- Autonomy with safety: agents act on their own, but guardrails (PII redaction, cost limits, policy checks) keep them inside the lines.

- Composable skills: add a new tool (e.g., HazChem DB) without touching SAP or Loftware.

- Future-proof: swap LLM vendors (OpenAI/Anthropic/custom) without rewriting the system—only the LLM tool changes.

1) Gateways (ingress)

Turn external triggers (SAP IDoc, REST, Slack) into events.

Python – FastAPI gateway → Kafka

# pip install fastapi uvicorn confluent-kafka pydantic

from fastapi import FastAPI

from pydantic import BaseModel

from confluent_kafka import Producer

import json, os

app = FastAPI(title="Ingress Gateway")

producer = Producer({"bootstrap.servers": os.getenv("KAFKA_BROKERS", "localhost:9092")})

TOPIC = os.getenv("TOPIC", "label.intake")

class ProcessOrder(BaseModel):

po_id: str

plant: str

material: str

quantity: float

priority: str = "Normal"

@app.post("/sap/process-order")

def ingest_po(po: ProcessOrder):

evt = {

"type": "po.created",

"specversion": "1.0",

"source": "sap.saturn",

"id": po.po_id,

"data": po.dict(),

}

producer.produce(TOPIC, json.dumps(evt).encode("utf-8"))

producer.flush()

return {"ok": True, "published_to": TOPIC}

C# – Minimal API gateway → Kafka

// <PackageReference Include="Confluent.Kafka" Version="2.*" />

var builder = WebApplication.CreateBuilder(args);

var app = builder.Build();

using var producer = new ProducerBuilder<string, string>(

new ProducerConfig { BootstrapServers = Environment.GetEnvironmentVariable("KAFKA_BROKERS") ?? "localhost:9092" }

).Build();

app.MapPost("/sap/process-order", async (ProcessOrder po) =>

{

var evt = new {

type = "po.created",

specversion = "1.0",

source = "sap.saturn",

id = po.po_id,

data = po

};

await producer.ProduceAsync("label.intake",

new Message<string, string> { Key = po.po_id, Value = System.Text.Json.JsonSerializer.Serialize(evt) });

return Results.Ok(new { ok = true });

});

app.Run();

record ProcessOrder(string po_id, string plant, string material, double quantity, string? priority);

Why it’s important: Gateways normalize inputs into CloudEvents (or a house schema), giving every downstream consumer a consistent contract.

2) Event Mesh / Broker

Kafka/Event Hubs routes, buffers, and replays events. Create compact topics:

label.intake(ingress)label.enriched(after enrichment)label.print.requested/label.print.completed(actuation lifecycle)agent.audit(governance stream)

Tip: add schema (Avro/JSON-Schema) and compaction for idempotency.

Python – Consumer skeleton

from confluent_kafka import Consumer, KafkaException

import json, os

c = Consumer({

"bootstrap.servers": os.getenv("KAFKA_BROKERS","localhost:9092"),

"group.id": "enricher",

"auto.offset.reset": "earliest"

})

c.subscribe(["label.intake"])

try:

while True:

msg = c.poll(1.0)

if not msg:

continue

if msg.error():

raise KafkaException(msg.error())

evt = json.loads(msg.value())

# hand off to agent (below)

finally:

c.close()

C# – Consumer skeleton

using Confluent.Kafka;

var conf = new ConsumerConfig {

GroupId = "enricher",

BootstrapServers = Environment.GetEnvironmentVariable("KAFKA_BROKERS") ?? "localhost:9092",

AutoOffsetReset = AutoOffsetReset.Earliest

};

using var consumer = new ConsumerBuilder<Ignore, string>(conf).Build();

consumer.Subscribe("label.intake");

while (true)

{

var cr = consumer.Consume();

var evt = System.Text.Json.JsonDocument.Parse(cr.Message.Value);

// hand off to agent (below)

}

Why it’s important: The mesh decouples producers from consumers, enabling scale-out and replay for reliability and debugging.

3) Orchestrator (goal → steps → agents)

For Python, LangGraph is perfect for stateful, branching workflows.

In .NET, Durable Functions is a proven orchestrator.

Python – LangGraph mini-orchestrator

# pip install langgraph langchain-openai

from langgraph.graph import StateGraph, END

from typing import TypedDict, Optional, List, Dict

class FlowState(TypedDict):

po_id: str

enriched: Optional[Dict]

actions: List[str]

decision: Optional[str]

confidence: float

def enrich(state: FlowState) -> FlowState:

# pretend to call SAP/BOM API + RAG lookup

state["enriched"] = {"template":"GHS-CLP-A4", "hazard":"H315", "sentences":["Causes skin irritation."]}

state["actions"].append("enriched")

return state

def decide(state: FlowState) -> FlowState:

# pretend LLM calls with tools; if low confidence, route to human

state["decision"] = "print"

state["confidence"] = 0.86

state["actions"].append("decided")

return state

def act(state: FlowState) -> FlowState:

# call Loftware Automation API

state["actions"].append(f"printed:{state['enriched']['template']}")

return state

g = StateGraph(FlowState)

g.add_node("enrich", enrich)

g.add_node("decide", decide)

g.add_node("act", act)

g.set_entry_point("enrich")

g.add_edge("enrich", "decide")

g.add_edge("decide", "act")

g.add_edge("act", END)

workflow = g.compile()

C# – Durable Functions orchestrator (fan-out/fan-in)

// <PackageReference Include="Microsoft.Azure.WebJobs.Extensions.DurableTask" Version="2.*" />

[FunctionName("LabelOrchestrator")]

public static async Task Run(

[OrchestrationTrigger] IDurableOrchestrationContext ctx)

{

var po = ctx.GetInput<ProcessOrder>();

var enriched = await ctx.CallActivityAsync<dynamic>("EnrichActivity", po);

var decision = await ctx.CallActivityAsync<Decision>("DecisionActivity", enriched);

if (decision.Action == "print")

await ctx.CallActivityAsync("PrintActivity", new { po.po_id, enriched.Template });

await ctx.CallActivityAsync("AuditActivity", new { po.po_id, decision });

}

public record ProcessOrder(string po_id, string plant, string material, double quantity);

public record Decision(string Action, double Confidence);

Why it’s important: The orchestrator owns state & branching, so agents stay single-purpose and swappable.

4) Agents (do the work) + Tools/Skills

Each agent wraps one responsibility and uses tools (HTTP, DB, LLM) to do it.

Python – “Template Selector” agent with tools

import requests, os

def get_templates(material: str) -> list[str]:

# pretend: query Loftware or internal catalog

return ["GHS-CLP-A4", "Transport-Label-100x150"]

def rag_lookup(hazard_code: str) -> list[str]:

# pretend: vector search call (see Memory section)

return ["Causes skin irritation.", "Wear protective gloves."]

def select_template(evt) -> dict:

templates = get_templates(evt["data"]["material"])

sentences = rag_lookup("H315")

# simple heuristic + LLM could refine

return {"template": templates[0], "sentences": sentences}

C# – Agent with Semantic Kernel tool call

// <PackageReference Include="Microsoft.SemanticKernel" Version="1.*" />

using Microsoft.SemanticKernel;

using Microsoft.SemanticKernel.Connectors.OpenAI;

var kernel = Kernel.CreateBuilder()

.AddOpenAIChatCompletion("gpt-4o", Environment.GetEnvironmentVariable("OPENAI_API_KEY"))

.Build();

var prompt = @"Given material: {{$material}} and hazards: {{$hazards}},

choose the best label template from: GHS-CLP-A4, Transport-Label-100x150.

Return JSON {""template"":""..."",""reason"":""...""}";

var function = kernel.CreateFunctionFromPrompt(prompt);

var result = await kernel.InvokeAsync(function, new() {

["material"] = "Resin-1234",

["hazards"] = "H315"

});

Console.WriteLine(result.GetValue<string>());

Why it’s important: Clear single-purpose agents make testing, scaling, and rollback straightforward.

5) Memory (Hybrid: vectors + facts/state)

- Vector store (RAG): store regulatory sentences, SDS snippets, prior label rationales.

- Relational/NoSQL: store label print status, PO → Template mapping, audit.

Python – Azure OpenAI embeddings + FAISS (local)

# pip install faiss-cpu openai tiktoken

from openai import OpenAI

import faiss, numpy as np, os

client = OpenAI(api_key=os.environ["AZURE_OPENAI_KEY"], base_url=os.environ["AZURE_OPENAI_ENDPOINT"])

EMBED_MODEL = os.getenv("EMBED_MODEL","text-embedding-3-large")

corpus = [

{"id":"H315-1","text":"Causes skin irritation."},

{"id":"P280","text":"Wear protective gloves/protective clothing/eye protection/face protection."}

]

vecs = []

for doc in corpus:

emb = client.embeddings.create(model=EMBED_MODEL, input=doc["text"]).data[0].embedding

vecs.append(np.array(emb, dtype="float32"))

index = faiss.IndexFlatL2(len(vecs[0]))

index.add(np.vstack(vecs))

def rag_search(query, k=2):

qv = client.embeddings.create(model=EMBED_MODEL, input=query).data[0].embedding

D,I = index.search(np.array([qv], dtype="float32"), k)

return [corpus[i] for i in I[0]]

C# – Azure AI Search (vector) upsert + query

// <PackageReference Include="Azure.Search.Documents" Version="11.*" />

using Azure;

using Azure.Search.Documents;

using Azure.Search.Documents.Indexes;

using Azure.Search.Documents.Models;

var endpoint = new Uri(Environment.GetEnvironmentVariable("AZURE_SEARCH_ENDPOINT")!);

var key = new AzureKeyCredential(Environment.GetEnvironmentVariable("AZURE_SEARCH_KEY")!);

var client = new SearchClient(endpoint, "regulatory-text", key);

// Upsert (assumes index has vector fields configured)

await client.MergeOrUploadDocumentsAsync(new[] {

new { id="H315-1", text="Causes skin irritation.", vector = /* your embedding float[] */ new float[]{ /* ... */ } }

});

// Vector query

var options = new SearchOptions {

VectorSearch = new() { Queries = { new VectorizedQuery(yourQueryEmbedding){ KNearestNeighborsCount = 3, Fields = { "vector" } } } }

};

var results = client.Search<SearchDocument>("", options);

Why it’s important: Context + facts beat hallucination. RAG grounds answers; SQL/NoSQL ensures consistent state.

6) Observability & Governance

- OpenTelemetry for traces/metrics/logs.

- Policy filters for PII, cost, and content moderation.

- Audit stream (

agent.audit) for every decision.

Python – OpenTelemetry quick start

# pip install opentelemetry-sdk opentelemetry-exporter-otlp

from opentelemetry import trace

from opentelemetry.sdk.trace import TracerProvider

from opentelemetry.sdk.trace.export import BatchSpanProcessor

from opentelemetry.exporter.otlp.proto.grpc.trace_exporter import OTLPSpanExporter

tp = TracerProvider()

tp.add_span_processor(BatchSpanProcessor(OTLPSpanExporter(endpoint=os.getenv("OTLP_EP","http://localhost:4317"))))

trace.set_tracer_provider(tp)

tracer = trace.get_tracer("agent.print")

with tracer.start_as_current_span("print-label") as span:

span.set_attribute("po.id", "PO123")

span.set_attribute("template", "GHS-CLP-A4")

C# – Cost guardrail (simple)

var maxTokensPerRun = 2000;

var used = 0;

string AskLLM(string prompt)

{

var tokens = prompt.Length / 3; // rough estimate

if (used + tokens > maxTokensPerRun) throw new InvalidOperationException("Budget exceeded");

used += tokens;

// call provider...

return "ok";

}

Why it’s important: Without observability, distributed AI feels “magic.” With it, you get trust, debuggability, and compliance.

7) Managing lifecycles (treat agents like microservices)

- Package each agent as a container with a health endpoint.

- Version via tags (

agent-print:v1.4.2). - Roll out with blue/green on AKS.

- Wire CI/CD (ADO/GitHub Actions) to run unit tests + contract tests (schema-compat).

GitHub Actions – containerize & push (snippet)

name: build-agent

on: [push]

jobs:

docker:

runs-on: ubuntu-latest

steps:

- uses: actions/checkout@v4

- uses: docker/setup-buildx-action@v3

- uses: docker/login-action@v3

with:

registry: ghcr.io

username: ${{ github.actor }}

password: ${{ secrets.GITHUB_TOKEN }}

- run: docker build -t ghcr.io/firstcrazydeveloper/agent-print:${{ github.sha }} .

- run: docker push ghcr.io/firstcrazydeveloper/agent-print:${{ github.sha }}

Putting it together (message flow)

- Gateway receives

/sap/process-order→ publishespo.createdtolabel.intake. - Orchestrator consumes, calls Enricher Agent (BOM/regulatory via RAG), Decision Agent (LLM with guardrails).

- If confidence ≥ threshold → Print Agent hits Loftware Automation API and emits

label.print.*. - If confidence < threshold → Slack Approval (human-in-the-loop) publishes

label.approvedback to mesh. - Audit spans + events written to

agent.auditand your SIEM.

Integration with legacy systems (the tricky parts & how to win)

- Wrap SAP/Loftware with idempotent adapters that publish/consume events.

- Use outbox pattern in services that must update DB + publish exactly once.

- Gradually “event-enable” monolith components behind gateways while keeping old APIs alive.

#EventDrivenArchitecture #AIagents #Azure #Kafka #ManufacturingAI #IoT #OpenAI #Anthropic #AgenticAI #SystemDesign #LangGraph #AzureFunctions #AbhishekKumar #FirstCrazyDeveloper

Leave a comment